Indoor location, location, location

A CMU team and a CMU spinout took first and second place, respectively, at the 2018 Microsoft Indoor Localization Competition.

Industry demand is high for effective and intuitive methods of indoor localization, essentially the indoor equivalent of GPS. The ability to pinpoint your own location inside a building has major implications for augmented reality (AR), social networking, manufacturing, medicine, transportation, tourism and hospitality services, and much more. That’s why, since 2014, Microsoft has hosted its annual Indoor Localization Competition, in which teams apply indoor localization technologies in unfamiliar environments and compete for the most accurate location readings.

In April, 25 teams met at the Palácio da Bolsa in Porto, Portugal, for the fifth annual contest. At the end of the event, CMU students walked away with first and second place, beating out competitors from industry and academia.

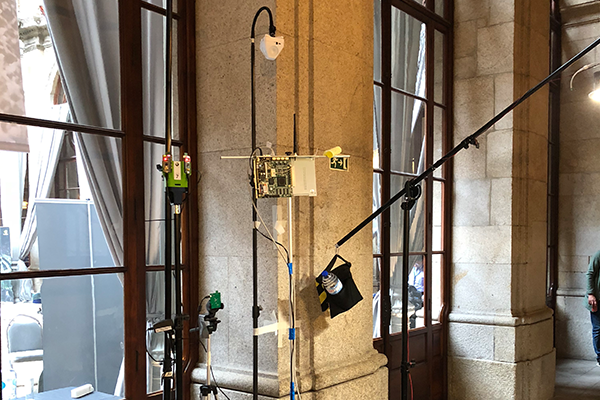

This year, contestants were separated into two categories and judged by their devices’ ability to report their location in either two or three dimensions. Both CMU teams placed in the 3-D category, where teams could deploy 10 beacons throughout the test area as part of their systems. Each device traversed an area of the palace’s first and second floors, as well as the large staircase connecting them, and reported its location as it followed the predetermined path. The readings were then compared against ground-truth readings from a LiDAR sensor, a highly accurate but expensive system that uses reflected light beams to measure exact position in a method similar to radar. The comparison was used to calculate each team’s average location error, and the team with the lowest error was deemed the most accurate.
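As a rough illustration of how an average location error can be computed, the Python sketch below takes the mean Euclidean distance between reported positions and ground-truth positions. The competition's exact scoring procedure is not described here, so the function name `mean_location_error`, the pairing of points, and the sample coordinates are all hypothetical assumptions.

```python
import math


def mean_location_error(reported, ground_truth):
    """Return the mean Euclidean distance between paired 3-D points.

    Both arguments are sequences of (x, y, z) tuples in the same units.
    Assumption: each reported point is paired with the ground-truth
    point at the same index along the path.
    """
    if not reported or len(reported) != len(ground_truth):
        raise ValueError("need two non-empty sequences of equal length")
    errors = [math.dist(r, g) for r, g in zip(reported, ground_truth)]
    return sum(errors) / len(errors)


# Hypothetical sample data in meters, not taken from the competition.
reported = [(0.0, 0.0, 0.0), (1.0, 2.0, 0.0), (4.0, 6.0, 3.0)]
ground_truth = [(0.0, 0.3, 0.0), (1.0, 2.0, 0.4), (4.0, 6.0, 3.0)]

error_m = mean_location_error(reported, ground_truth)
print(f"average location error: {error_m * 100:.1f} cm")
```

Under this assumed metric, a lower value means the reported path stayed closer to the LiDAR reference path.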

The competition was tough, and the palace contributed its own unique challenges.

“Our ultrasound-based system faced extremely high multipath interference (very long acoustic reverberation, i.e. echoes) due to the stone construction of the space with no sound absorbing materials,” says Patrick Lazik, an ECE alumnus (Ph.D. ’17) and a member of Yodel Labs, a small CMU startup. “The multistory layout of the space also made surveying the locations of our beacons difficult.”

Lazik and the Yodel Labs team placed second in the competition, while first place went to ECE Ph.D. candidate Niranjini Rajagopal and the team from CMU. Rajagopal and Lazik are seasoned competitors, having been on the CMU team that won first place in the 2015 contest. They have remained at the leading edge of indoor localization technology, although much has changed since their last win.

“In 2015, the systems at the competition were much more exploratory, and people were trying all sorts of approaches,” Rajagopal notes. “While this was exciting and chaotic, the latest version of the competition is much more refined, with more presence from startups. We have also seen most teams converge on technologies like UWB [ultra-wideband], acoustic, or ultrasonic beacons.”

Electrical and Computer Engineering (ECE) Associate Professor Anthony Rowe served as an advisor for the winning teams in 2018 and 2015. He also served as an organizer of the event, which is sponsored by Microsoft Research and Bosch.

The winners

1st Place — Realty and Reality: Where Location Matters

Source: Carnegie Mellon University College of Engineering

The team includes ECE Ph.D. candidate Niranjini Rajagopal, ECE Ph.D. student John Miller, ECE MS student Krishna Kumar, and CyLab Postdoctoral Researcher Anh Luong.

CMU captured first place once again with an average location error of only 27 centimeters by fusing ultra-wideband (UWB) radio and visual inertial odometry (VIO) technologies. While thrilled with their win, the team is already looking ahead, improving their technology to create solutions that are flexible and user-friendly enough for widespread deployment.

“A practical system should not require any explicit mapping of beacons or expertise in deploying beacons,” says Niranjini Rajagopal. “These beaconing technologies might end up being embedded in devices deployed indoors such as WiFi routers, phones, or IoT devices. A network of these devices together would be able to provide an accurate localization service.”

The team is already integrating their technology into several projects. They developed a pilot version of a multi-user AR system that won the Best Demo Award at the 2018 Information Processing in Sensor Networks (IPSN) Conference. Their other current project, funded by the National Institute of Standards and Technology (NIST), centers on creating an effective method of infrastructure-free localization (without mounted beacons or sensors) for firefighters, using sensors already integrated into their equipment and devices.

2nd Place — ALPS: The Acoustic Location Processing System

Yodel Labs, a spinout built on CMU's Acoustic Location Processing System (ALPS) technology, which took first place at the 2015 competition, won second place this year. ALPS combines ultrasound waves, Bluetooth signals, and visual inertial odometry (VIO), using custom beacons that work with off-the-shelf smartphones and tablets. This earned the team the title of Best Phone-Based System and second place overall, with an average location error of just 47 centimeters.

Yodel is commercializing its technology, using a National Science Foundation (NSF) Small Business Innovation Research (SBIR) grant of $250,000 to improve methods for automatically localizing its custom beacons in preparation for mass production. The team’s ultimate goal is to deploy its localization technology to enable multi-user, persistent AR across large areas.

“For us, the most interesting applications are the ones that require high accuracy, such as multi-user augmented reality and next generation assistive technologies like navigation systems for the visually impaired,” says Lazik. “AR especially is an extremely fast-growing field that requires cutting-edge localization at its foundation.”

Yodel team members include Patrick Lazik (ECE, Ph.D. ’17) and Nick Wilkerson (ECE, M.S. ’14).